Kafka Extended APIs for Developers

Consumers and Producers are considered low level. Since their introduction, other higher-level APIs have been created, specifically:

- Kafka Connect

- Kafka Streams

- Schema Registry

- KSQL

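Kafka brokers do NOT verify the messages they receive.

Kafka treats messages as opaque bytes. It never deserializes them, and it can send them from disk to consumers without copying them into the broker's application memory (zero copy).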
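Kafka implements zero copy in Java through `FileChannel.transferTo`, which on Linux is typically backed by the `sendfile` system call. The Python sketch below only illustrates that OS mechanism and is not Kafka's code. A temporary file stands in for a log segment, and its contents are hypothetical. It is sent to a socket with `socket.sendfile()`, which uses `os.sendfile()` where the platform supports it. In that case the kernel moves the bytes from file to socket without this process reading or parsing them. Otherwise it falls back to ordinary `send()` calls.

```python
import socket
import tempfile

# Hypothetical segment contents: arbitrary bytes, not even valid UTF-8.
payload = b'{"id": 1}\x00\xffraw bytes'

with tempfile.TemporaryFile() as segment:  # stands in for a log segment on disk
    segment.write(payload)
    segment.flush()
    segment.seek(0)  # the send() fallback reads from the current position
    sender, receiver = socket.socketpair()  # "broker" end and "consumer" end
    with sender, receiver:
        # Uses os.sendfile() where supported (kernel copies file -> socket);
        # otherwise socket.sendfile() falls back to regular send() calls.
        sent = sender.sendfile(segment)
        received = b""
        while len(received) < len(payload):
            chunk = receiver.recv(len(payload) - len(received))
            if not chunk:
                break
            received += chunk

print(sent, received == payload)
```

The bytes arrive unchanged and are never inspected. This is exactly why the broker cannot reject malformed data on its own.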


The Schema Registry is therefore a separate component:

- Producers and Consumers need to be able to talk to it

- The Schema Registry must be able to reject bad data before it is sent to Kafka

- Supports Avro, Protobuf and JSON Schema
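The division of labour above can be sketched with toy in-memory classes. This is a minimal sketch under stated assumptions. `Broker`, `SchemaRegistry`, `matches` and `produce` are hypothetical names, not the real Confluent client or REST API. A schema here is simply a mapping from field name to Python type. The subject name `users-value` only mimics the common `<topic>-value` convention. The broker stores whatever bytes it is given. The producer checks each record against the latest registered schema version before anything reaches the broker.

```python
import json


class Broker:
    """Toy broker: appends raw bytes to a topic and never inspects them."""

    def __init__(self):
        self.topics = {}  # topic name -> list of raw byte payloads

    def append(self, topic, payload):
        self.topics.setdefault(topic, []).append(payload)


class SchemaRegistry:
    """Hypothetical in-memory stand-in for a Schema Registry.

    Stores a list of schema versions per subject; this is NOT the real
    Confluent Schema Registry API.
    """

    def __init__(self):
        self._subjects = {}  # subject -> [schema v1, schema v2, ...]

    def register(self, subject, schema):
        versions = self._subjects.setdefault(subject, [])
        versions.append(schema)
        return len(versions)  # version numbers start at 1

    def latest(self, subject):
        versions = self._subjects[subject]
        return len(versions), versions[-1]


def matches(record, schema):
    """Toy validation: every declared field must be present with the declared type."""
    return all(
        name in record and isinstance(record[name], field_type)
        for name, field_type in schema.items()
    )


def produce(broker, registry, topic, record):
    """Validate against the latest registered schema, then send bytes to the broker."""
    version, schema = registry.latest(f"{topic}-value")
    if not matches(record, schema):
        raise ValueError(f"record does not match schema v{version}: {record}")
    # Simplified envelope carrying the schema version; not the real wire format.
    broker.append(topic, json.dumps({"version": version, "data": record}).encode())


registry = SchemaRegistry()
registry.register("users-value", {"id": int, "email": str})
broker = Broker()

produce(broker, registry, "users", {"id": 1, "email": "a@example.com"})  # accepted
broker.append("users", b"\x00garbage")  # the broker alone accepts any bytes
try:
    produce(broker, registry, "users", {"id": "oops"})  # rejected before reaching Kafka
except ValueError as err:
    print(err)
```

Because validation lives on the client side of the registry, both producers and consumers need access to it. That requirement is also why it needs to be highly available.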

Gotchas:

- Needs to be highly available

- Schema formats have a learning curve (Avro, etc.)

You can also evolve schemas over time. The same problems you face when changing any contract apply. As a rule of thumb, additions are backward compatible, while modifications and deletions are not.

Schema changes are versioned (v1, v2, etc.)

You can also assign default values for new properties as the schemas evolve.
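Why defaults matter can be shown with a small sketch, assuming a hypothetical schema representation in which each field name maps to its default value. A `REQUIRED` sentinel marks fields without one. `read_with` is a hypothetical helper that loosely mirrors Avro schema resolution. Fields missing from an old record are filled from the newer reader schema's defaults. Fields the reader schema does not declare are ignored.

```python
# Hypothetical schema representation: field name -> default value.
# The REQUIRED sentinel marks a field that has no default.
REQUIRED = object()

USER_V1 = {"id": REQUIRED, "email": REQUIRED}
# v2 adds a field *with* a default, so data written with v1 stays readable.
USER_V2 = {"id": REQUIRED, "email": REQUIRED, "country": "unknown"}


def read_with(record, reader_schema):
    """Read a record written with an older schema using a newer reader schema.

    Missing fields are filled from the reader schema's defaults; a missing
    field without a default raises KeyError. Fields in the record that the
    reader schema does not declare are ignored.
    """
    result = {}
    for name, default in reader_schema.items():
        if name in record:
            result[name] = record[name]
        elif default is REQUIRED:
            raise KeyError(f"field {name!r} missing and has no default")
        else:
            result[name] = default
    return result


old_record = {"id": 1, "email": "a@example.com"}  # written with USER_V1
print(read_with(old_record, USER_V2))
# {'id': 1, 'email': 'a@example.com', 'country': 'unknown'}
```

If v2 had declared `country` as `REQUIRED`, every record written with v1 would fail to read. This is the kind of breakage that a default value avoids.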

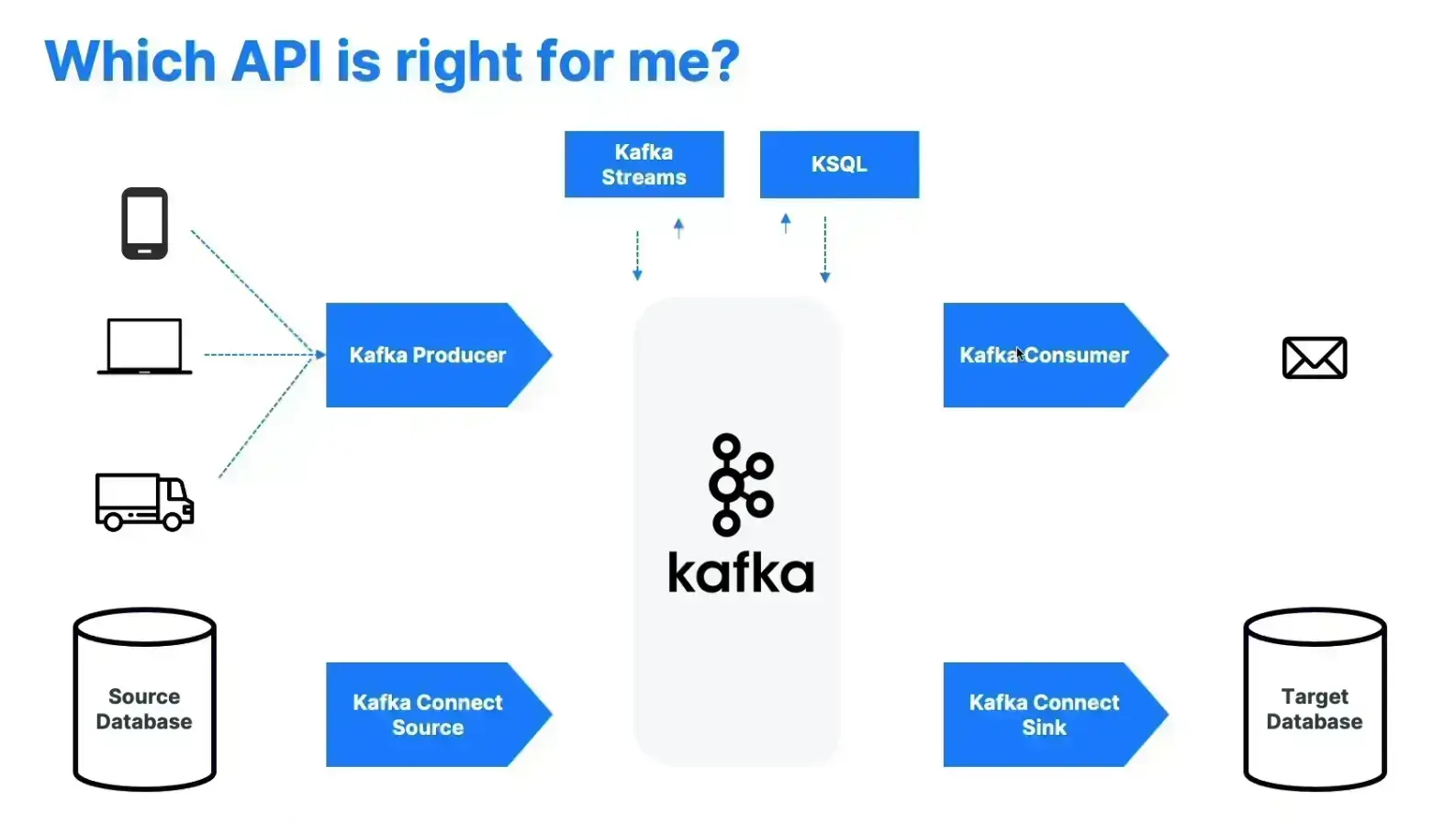

- Known data source: use a Kafka Connect Source or Sink, depending on whether data flows into or out of Kafka

- Use a Producer to collect data directly from the source

- Use a Consumer for ephemeral, short-lived processing of messages

- Use Kafka Streams for ETL whose output is written back into Kafka

- Use KSQL to run queries on Kafka data